Preface

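- The offline environment is arm64 and it is hard to copy information out of it, so the Docker deployment and everything before it is demonstrated on x86. The corresponding arm64 commands are given as well; please take care to tell them apart.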

System environment

openEuler-22.03-LTS-x86_64

Authorized users only. All activities may be monitored and reported.

Last login: Mon Jul 28 17:35:39 2025

Welcome to 5.10.0-60.18.0.50.oe2203.x86_64

System information as of time: Mon Jul 28 17:36:17 CST 2025

System load: 0.08

Processes: 239

Memory used: 7.9%

Swap used: 0%

Usage On: 3%

IP address: 192.168.229.131

Users online: 2

Installing base packages

1. Switch to a domestic (China) mirror

sudo cp /etc/yum.repos.d/openEuler.repo /etc/yum.repos.d/openEuler.repo.bak

# Back up the original repository configuration file

sudo vim /etc/yum.repos.d/openEuler.repo

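# Edit the repository configuration file

Replace the original repository URLs in the file with a domestic mirror, for example the Huawei Cloud mirror: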

[OS]

name=OS

baseurl=https://repo.huaweicloud.com/openeuler/openEuler-22.03-LTS/OS/$basearch/

enabled=1

gpgcheck=1

gpgkey=https://repo.huaweicloud.com/openeuler/openEuler-22.03-LTS/OS/$basearch/RPM-GPG-KEY-openEuler

[everything]

name=everything

baseurl=https://repo.huaweicloud.com/openeuler/openEuler-22.03-LTS/everything/$basearch/

enabled=1

gpgcheck=1

gpgkey=https://repo.huaweicloud.com/openeuler/openEuler-22.03-LTS/everything/$basearch/RPM-GPG-KEY-openEuler

[EPOL]

name=EPOL

baseurl=https://repo.huaweicloud.com/openeuler/openEuler-22.03-LTS/EPOL/$basearch/

enabled=1

gpgcheck=1

gpgkey=https://repo.huaweicloud.com/openeuler/openEuler-22.03-LTS/OS/$basearch/RPM-GPG-KEY-openEuler

Clear and rebuild the cache:

sudo dnf clean all

sudo dnf makecache

Source: https://forum.openeuler.org/t/topic/768
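You may need to recreate the repository file on several machines. In that case the three sections above can be written from a script instead of being typed into vim. This is a minimal sketch. It assumes Python 3 is available on the host and that you write to a scratch path first, then copy the result over /etc/yum.repos.d/openEuler.repo yourself. The script name make_repo.py is hypothetical.

```python
import configparser
import sys

# Mirror base URL used in this article (Huawei Cloud, openEuler 22.03 LTS)
MIRROR = "https://repo.huaweicloud.com/openeuler/openEuler-22.03-LTS"
REPOS = ("OS", "everything", "EPOL")


def build_repo(mirror: str = MIRROR) -> configparser.ConfigParser:
    """Return the [OS], [everything] and [EPOL] sections shown above."""
    # interpolation=None keeps "$basearch" literal so that dnf expands it, not Python
    cfg = configparser.ConfigParser(interpolation=None)
    os_key = f"{mirror}/OS/$basearch/RPM-GPG-KEY-openEuler"
    for repo in REPOS:
        cfg[repo] = {
            "name": repo,
            "baseurl": f"{mirror}/{repo}/$basearch/",
            "enabled": "1",
            "gpgcheck": "1",
            # The original file points EPOL at the OS signing key
            "gpgkey": os_key if repo == "EPOL" else f"{mirror}/{repo}/$basearch/RPM-GPG-KEY-openEuler",
        }
    return cfg


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical): python3 make_repo.py /tmp/openEuler.repo.new
    with open(sys.argv[1], "w", encoding="utf-8") as fp:
        build_repo().write(fp, space_around_delimiters=False)
```

Writing to a scratch file first lets you diff it against the backup before replacing the live configuration.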

2. Install the necessary tools

yum install tar wget curl vim nano -y

Installing Docker

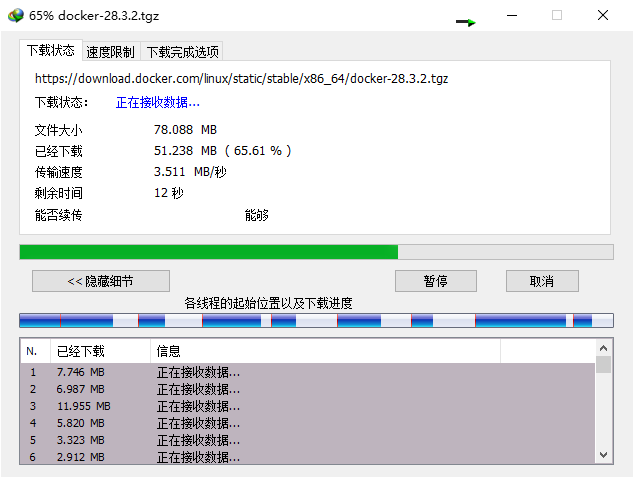

Prepare the matching packages on another machine that has Internet access.

Demo machine: x86: docker-28.3.2.tgz

Offline machine: ARM64: docker-28.3.2.tgz

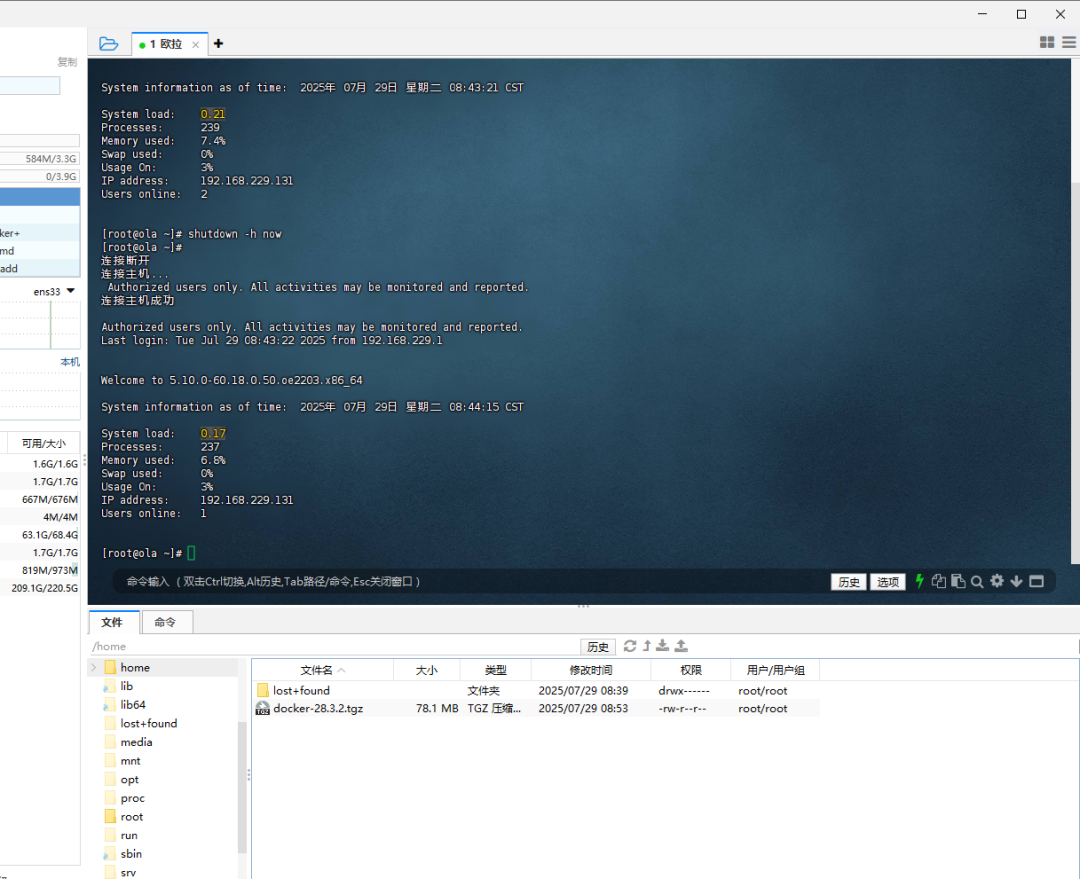
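On the Internet-connected machine, the download links for the Docker static bundle and for the docker-compose binary used later follow a fixed pattern per architecture. The small sketch below prints them. It assumes the upstream layout of download.docker.com/linux/static/stable and the asset names of the docker/compose GitHub releases. The versions are the ones used in this article.

```python
import platform

DOCKER_VERSION = "28.3.2"
COMPOSE_VERSION = "v2.39.1"

# Normalise the usual `uname -m` / Docker spellings to the names both projects use
ARCH_ALIASES = {"x86_64": "x86_64", "amd64": "x86_64", "aarch64": "aarch64", "arm64": "aarch64"}


def package_urls(arch: str) -> dict:
    """Return download URLs for the Docker static bundle and the Compose binary."""
    target = ARCH_ALIASES.get(arch.lower())
    if target is None:
        raise ValueError(f"unsupported architecture: {arch}")
    return {
        "docker": f"https://download.docker.com/linux/static/stable/{target}/docker-{DOCKER_VERSION}.tgz",
        "compose": f"https://github.com/docker/compose/releases/download/{COMPOSE_VERSION}/docker-compose-linux-{target}",
    }


if __name__ == "__main__":
    print("this machine:", platform.machine())
    # Download for the OFFLINE machine's architecture, not necessarily this one's
    for arch in ("x86_64", "aarch64"):
        for name, url in package_urls(arch).items():
            print(arch, name, url)
```

Pass the offline machine's architecture (arm64 here), not the architecture of the machine doing the download.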

- Upload it to the target directory

cd /home/

tar -zxvf docker-28.3.2.tgz

# Extract the archive

chmod -R +x docker

# Recursively grant execute permission

cp docker/* /usr/bin

# Copy the binaries to a system directory

Write the Docker service file

vim /etc/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

ExecStart=/usr/bin/dockerd

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

chmod 644 /etc/systemd/system/docker.service

# Set permissions (a unit file only needs to be readable; systemd warns if it is marked executable)

systemctl daemon-reload

# Reload the configuration files

systemctl enable docker.service

# Start on boot

systemctl start docker

# Start Docker

systemctl status docker

# Check Docker status

systemctl restart docker

# Restart the Docker service

Configure registry mirrors and change the storage path
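dockerd refuses to start when /etc/docker/daemon.json is not valid JSON, so a stray comma typed in vim can easily break the service. As an alternative to the manual edit in this section, the minimal sketch below merges the same two keys into the file with Python's json module. Any keys already present are kept. The script name write_daemon_json.py is hypothetical. Run it as root, then reload and restart Docker as shown.

```python
import json
import os
import sys

# Mirrors from this article; availability of third-party mirrors changes over time
MIRRORS = [
    "https://docker.m.daocloud.io",
    "https://docker.imgdb.de",
    "https://docker-0.unsee.tech",
    "https://docker.hlmirror.com",
    "https://cjie.eu.org",
]


def merge_daemon_config(existing: dict, data_root: str, mirrors: list) -> dict:
    """Return a copy of existing with data-root and registry-mirrors set."""
    cfg = dict(existing)  # keep any keys already present
    cfg["data-root"] = data_root
    cfg["registry-mirrors"] = list(dict.fromkeys(mirrors))  # de-duplicate, keep order
    return cfg


def write_daemon_json(path: str, data_root: str, mirrors: list) -> dict:
    existing = {}
    if os.path.exists(path):
        with open(path, encoding="utf-8") as fp:
            existing = json.load(fp)  # raises if the current file is already malformed
    cfg = merge_daemon_config(existing, data_root, mirrors)
    os.makedirs(os.path.dirname(path) or ".", exist_ok=True)
    os.makedirs(data_root, exist_ok=True)
    with open(path, "w", encoding="utf-8") as fp:
        json.dump(cfg, fp, indent=2)
        fp.write("\n")
    return cfg


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical): python3 write_daemon_json.py /etc/docker/daemon.json
    print(json.dumps(write_daemon_json(sys.argv[1], "/home/docker", MIRRORS), indent=2))
```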

mkdir -p /etc/docker /home/docker

vim /etc/docker/daemon.json

Fill in the mirror addresses and the storage path:

{

"data-root": "/home/docker",

"registry-mirrors": ["https://docker.m.daocloud.io", "https://docker.imgdb.de", "https://docker-0.unsee.tech", "https://docker.hlmirror.com", "https://cjie.eu.org"]

}

sudo systemctl daemon-reload

# Reload the configuration

sudo systemctl restart docker

# Restart the Docker service

Installing docker-compose

Demo machine:

X86: docker-compose-linux-x86_64

Offline machine:

ARM64: docker-compose-linux-aarch64

- Upload it to the target directory

x86:

# Copy to a system directory

cp docker-compose-linux-x86_64 /usr/local/bin/docker-compose

# Grant execute permission

chmod +x /usr/local/bin/docker-compose

# Verify the installation

docker-compose --version

arm64:

# Copy to a system directory

cp docker-compose-linux-aarch64 /usr/local/bin/docker-compose

# Grant execute permission

chmod +x /usr/local/bin/docker-compose

# Verify the installation

docker-compose --version

Output:

[root@ola home]# docker-compose --version

Docker Compose version v2.39.1

Image deployment

Obtaining the image

mindie_2.0.T17.B010-800I-A2-py3.11-openeuler24.03-lts-aarch64.tar.gz

- Note: everything below is done on the physical arm64 machine.

Importing the image

docker load -i mindie_2.0.T17.B010-800I-A2-py3.11-openeuler24.03-lts-aarch64.tar.gz

Obtaining the driver

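Driver installation

The driver is already installed on my offline machines, so I skip this step; there are plenty of tutorials online.

Driver verification

Run on the host:

npu-smi info

If you see output like the following, the driver is working: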

npu-smi info

+------------------------------------------------------------------------------------------------+

| npu-smi 24.1.0.3 Version: 24.1.0.3 |

+---------------------------+---------------+----------------------------------------------------+

| NPU Name | Health | Power(W) Temp(C) Hugepages-Usage(page)|

| Chip | Bus-Id | AICore(%) Memory-Usage(MB) HBM-Usage(MB) |

+===========================+===============+====================================================+

| 0 910B2 | OK | 101.0 40 0 / 0 |

| 0 | 0000:C1:00.0 | 0 0 / 0 5037 / 65536 |

.......

Preparing transformers-4.51.0

transformers-4.51.0

Then upload it to a directory on the host and extract it there; I use /apps/mindie/ as the example.
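The article does not say how the transformers-4.51.0 directory was built. It is later installed with pip install --no-index --find-links=., so it must contain wheel files. One way to produce such a directory, assumed here rather than taken from the original, is pip download on an Internet-connected machine, targeting the container's Python 3.11 on aarch64. The manylinux2014_aarch64 platform tag is an assumption about which wheels will fit.

```python
import shlex
import subprocess
import sys


def pip_download_cmd(dest="transformers-4.51.0", python_version="3.11",
                     platform_tag="manylinux2014_aarch64"):
    """Build a pip command that downloads transformers 4.51.0 and its
    dependencies as wheels for another interpreter and platform."""
    return [
        sys.executable, "-m", "pip", "download", "transformers==4.51.0",
        "-d", dest,
        # pip only allows --platform/--python-version with binary-only downloads
        "--only-binary=:all:",
        "--platform", platform_tag,
        "--python-version", python_version,
    ]


if __name__ == "__main__":
    cmd = pip_download_cmd()
    if "--run" in sys.argv:
        subprocess.run(cmd, check=True)  # needs Internet access
    else:
        print(shlex.join(cmd))  # dry run: only show the command
```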

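Model preparation

For downloading the model, see my article "Installing pipx on Ubuntu and configuring the ModelScope command-line tool".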
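An interrupted download of a large model would typically only show up later, when MindIE fails to load the weights. The quick check below can run before the directory is mounted into the container. It assumes Hugging Face-style sharded safetensors with a model.safetensors.index.json, the layout published for Qwen3-32B. The script name check_weights.py is hypothetical.

```python
import json
import os
import sys


def missing_files(model_dir: str) -> list:
    """Return what is missing from model_dir; an empty list means the check passed."""
    if not os.path.isdir(model_dir):
        return [model_dir]
    missing = []
    if not os.path.isfile(os.path.join(model_dir, "config.json")):
        missing.append("config.json")
    index_path = os.path.join(model_dir, "model.safetensors.index.json")
    if os.path.isfile(index_path):
        with open(index_path, encoding="utf-8") as fp:
            # weight_map: tensor name -> shard file; collect each shard once
            shards = sorted(set(json.load(fp)["weight_map"].values()))
        missing += [s for s in shards if not os.path.isfile(os.path.join(model_dir, s))]
    elif not any(name.endswith(".safetensors") for name in os.listdir(model_dir)):
        missing.append("*.safetensors")
    return missing


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical): python3 check_weights.py /apps/sharedstorage/Qwen3-32B
    problems = missing_files(sys.argv[1])
    print("OK" if not problems else "missing: " + ", ".join(problems))
    sys.exit(1 if problems else 0)
```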

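Configuration

- Notes:

1. If ipAddress is 0.0.0.0, allowAllZeroIpListening must be set to true.

2. worldSize must equal the number of card IDs in npuDeviceIds; together they control multi-card parallel inference. npuDeviceIds normally refers to logical card IDs (0, 1, 2, ... regardless of which physical cards are mounted). However, if the container runs with --privileged, all cards are mounted into it, and the IDs then refer to the physical card IDs.

3. If you have no certificates, httpsEnabled must be set to false.

4. maxPrefillTokens >= maxSeqLen > maxInputTokenLen

Example: "maxSeqLen" : 20480, "maxInputTokenLen" : 16384, "maxPrefillTokens" : 32678, "maxIterTimes" : 32768. maxIterTimes limits the number of decoding iterations, i.e. how many tokens can be generated. If it is very small, the model's output may stop abruptly. Unless you need a specific value, it is best to set it equal to maxSeqLen.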
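The four rules above can be checked mechanically before starting the container. The minimal sketch below uses the key names from the config.json that follows. The rules are the author's notes, not an official MindIE schema. The script cannot tell whether certificates are installed, so that is passed in as a has_certs flag (a hypothetical parameter).

```python
import json
import sys


def check_config(cfg: dict, has_certs: bool = False) -> list:
    """Return a list of rule violations; an empty list means all four rules hold."""
    errors = []
    server = cfg["ServerConfig"]
    backend = cfg["BackendConfig"]
    deploy = backend["ModelDeployConfig"]
    sched = backend["ScheduleConfig"]

    # Rule 1: listening on 0.0.0.0 must be allowed explicitly
    if server.get("ipAddress") == "0.0.0.0" and server.get("allowAllZeroIpListening") is not True:
        errors.append("ipAddress is 0.0.0.0 but allowAllZeroIpListening is not true")

    # Rule 2: each npuDeviceIds group must contain worldSize cards
    for model in deploy["ModelConfig"]:
        for group in backend["npuDeviceIds"]:
            if len(group) != model["worldSize"]:
                errors.append(f"{model['modelName']}: worldSize={model['worldSize']} "
                              f"but npuDeviceIds group {group} has {len(group)} cards")

    # Rule 3: HTTPS needs certificates; without them httpsEnabled must be false
    if server.get("httpsEnabled") and not has_certs:
        errors.append("httpsEnabled is true but no certificates are configured")

    # Rule 4: maxPrefillTokens >= maxSeqLen > maxInputTokenLen
    prefill = sched["maxPrefillTokens"]
    seq, inp = deploy["maxSeqLen"], deploy["maxInputTokenLen"]
    if not prefill >= seq > inp:
        errors.append(f"need maxPrefillTokens ({prefill}) >= maxSeqLen ({seq}) > maxInputTokenLen ({inp})")
    return errors


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical): python3 check_mindie_config.py /apps/mindie/config/config.json
    with open(sys.argv[1], encoding="utf-8") as fp:
        problems = check_config(json.load(fp))
    print("\n".join(problems) or "all checks passed")
    sys.exit(1 if problems else 0)
```

Run against the working configuration that follows, this sketch reports no violations: 8192 >= 2560 > 2048, two cards for worldSize 2, and HTTPS disabled.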

- The following configuration starts successfully for me.

config.json:

{

"Version" : "1.0.0",

"ServerConfig" :

{

"ipAddress" : "0.0.0.0",

"managementIpAddress" : "127.0.0.2",

"port" : 1025,

"managementPort" : 1026,

"metricsPort" : 1027,

"allowAllZeroIpListening" : true,

"maxLinkNum" : 1000,

"httpsEnabled" : false,

"fullTextEnabled" : false,

"tlsCaPath" : "security/ca/",

"tlsCaFile" : ["ca.pem"],

"tlsCert" : "security/certs/server.pem",

"tlsPk" : "security/keys/server.key.pem",

"tlsPkPwd" : "security/pass/key_pwd.txt",

"tlsCrlPath" : "security/certs/",

"tlsCrlFiles" : ["server_crl.pem"],

"managementTlsCaFile" : ["management_ca.pem"],

"managementTlsCert" : "security/certs/management/server.pem",

"managementTlsPk" : "security/keys/management/server.key.pem",

"managementTlsPkPwd" : "security/pass/management/key_pwd.txt",

"managementTlsCrlPath" : "security/management/certs/",

"managementTlsCrlFiles" : ["server_crl.pem"],

"kmcKsfMaster" : "tools/pmt/master/ksfa",

"kmcKsfStandby" : "tools/pmt/standby/ksfb",

"inferMode" : "standard",

"interCommTLSEnabled" : true,

"interCommPort" : 1121,

"interCommTlsCaPath" : "security/grpc/ca/",

"interCommTlsCaFiles" : ["ca.pem"],

"interCommTlsCert" : "security/grpc/certs/server.pem",

"interCommPk" : "security/grpc/keys/server.key.pem",

"interCommPkPwd" : "security/grpc/pass/key_pwd.txt",

"interCommTlsCrlPath" : "security/grpc/certs/",

"interCommTlsCrlFiles" : ["server_crl.pem"],

"openAiSupport" : "vllm",

"tokenTimeout" : 600,

"e2eTimeout" : 600,

"distDPServerEnabled":false

},

"BackendConfig" : {

"backendName" : "mindieservice_llm_engine",

"modelInstanceNumber" : 1,

"npuDeviceIds" : [[0,1]],

"tokenizerProcessNumber" : 8,

"multiNodesInferEnabled" : false,

"multiNodesInferPort" : 1120,

"interNodeTLSEnabled" : true,

"interNodeTlsCaPath" : "security/grpc/ca/",

"interNodeTlsCaFiles" : ["ca.pem"],

"interNodeTlsCert" : "security/grpc/certs/server.pem",

"interNodeTlsPk" : "security/grpc/keys/server.key.pem",

"interNodeTlsPkPwd" : "security/grpc/pass/mindie_server_key_pwd.txt",

"interNodeTlsCrlPath" : "security/grpc/certs/",

"interNodeTlsCrlFiles" : ["server_crl.pem"],

"interNodeKmcKsfMaster" : "tools/pmt/master/ksfa",

"interNodeKmcKsfStandby" : "tools/pmt/standby/ksfb",

"ModelDeployConfig" :

{

"maxSeqLen" : 2560,

"maxInputTokenLen" : 2048,

"truncation" : false,

"ModelConfig" : [

{

"modelInstanceType" : "Standard",

"modelName" : "Qwen3-32B",

"modelWeightPath" : "/data/Qwen3-32B",

"worldSize" : 2,

"cpuMemSize" : 5,

"npuMemSize" : -1,

"backendType" : "atb",

"trustRemoteCode" : false

}

]

},

"ScheduleConfig" :

{

"templateType" : "Standard",

"templateName" : "Standard_LLM",

"cacheBlockSize" : 128,

"maxPrefillBatchSize" : 50,

"maxPrefillTokens" : 8192,

"prefillTimeMsPerReq" : 150,

"prefillPolicyType" : 0,

"decodeTimeMsPerReq" : 50,

"decodePolicyType" : 0,

"maxBatchSize" : 200,

"maxIterTimes" : 512,

"maxPreemptCount" : 0,

"supportSelectBatch" : false,

"maxQueueDelayMicroseconds" : 5000

}

}

}

Creating the container
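The command that follows is written for two cards. For a different set of cards, three things must change together: the per-card --device flags in docker run, and npuDeviceIds and worldSize in config.json. The minimal sketch below derives all three from one list of card IDs. The helper names are hypothetical. The privileged/logical-ID rule comes from the notes in the configuration section.

```python
import json

# Shared device nodes passed in the docker run command of this article
COMMON_DEVICES = ["/dev/davinci_manager", "/dev/hisi_hdc", "/dev/devmm_svm"]


def device_flags(card_ids):
    """--device flags for the shared nodes plus one /dev/davinciN per card."""
    return [f"--device={dev}" for dev in COMMON_DEVICES] + [f"--device=/dev/davinci{i}" for i in card_ids]


def backend_fields(card_ids, privileged=True):
    """npuDeviceIds/worldSize for one model instance spread over all listed cards.

    Following the configuration notes: with --privileged every card is visible,
    so physical IDs are used; otherwise the container sees logical IDs 0..n-1.
    """
    ids = list(card_ids) if privileged else list(range(len(card_ids)))
    return {"npuDeviceIds": [ids], "worldSize": len(ids)}


if __name__ == "__main__":
    cards = [0, 1]  # the two-card example used in this article
    print(" \\\n".join(device_flags(cards)))
    print(json.dumps(backend_fields(cards)))
```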

Using two NPU cards as an example:

docker run -it -d --shm-size=128g \

--privileged \

--name mindie-Qwen3-32B \

--device=/dev/davinci_manager \

--device=/dev/hisi_hdc \

--device=/dev/devmm_svm \

--device=/dev/davinci0 \

--device=/dev/davinci1 \

--net=host \

-v /usr/local/Ascend/driver:/usr/local/Ascend/driver:ro \

-v /usr/local/sbin:/usr/local/sbin:ro \

-v /apps/sharedstorage/Qwen3-32B:/data/Qwen3-32B:ro \

-v /apps/mindie/transformers-4.51.0/:/root/mindie2/transformers-4.51.0:ro \

-v /apps/mindie/config/config.json:/usr/local/Ascend/mindie/latest/mindie-service/conf/config.json:ro \

mindie:2.0.T17.B010-800I-A2-py3.11-openeuler24.03-lts-aarch64 bash

Entering the container

docker exec -it mindie-Qwen3-32B bash

cd /root/mindie2/transformers-4.51.0/

pip install --no-index --find-links=. transformers==4.51.0

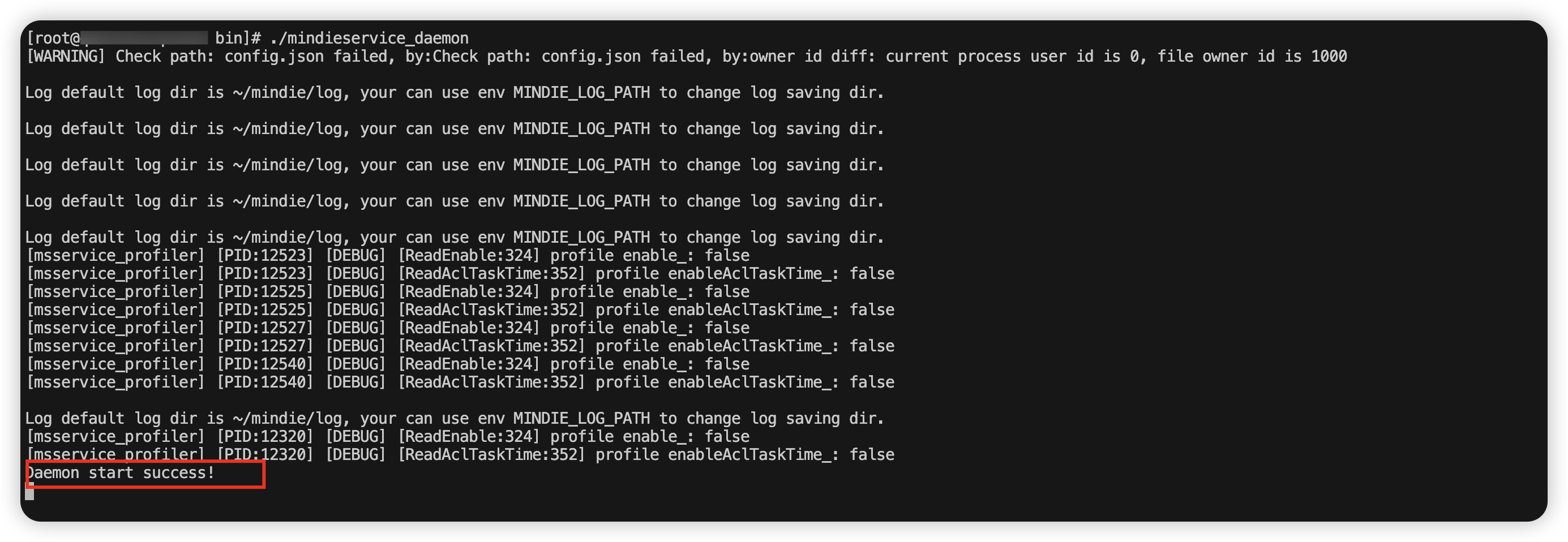

# Upgrade transformers

Starting the model

# Go to the startup directory

cd /usr/local/Ascend/mindie/latest/mindie-service/bin/

# Start the inference service

./mindieservice_daemon

The service has started successfully when you see the following:

Testing the service

curl "http://127.0.0.1:1025/v1/chat/completions" -H "Content-Type: application/json" \

-H "Authorization: Bearer xxx" \

-d '{

"model": "Qwen3-32B",

"stream": true,

"messages": [

{

"role": "user",

"content": "/no_think 你好."

}

]

}'

Deploying One API to wrap the interface

Obtaining the image

Pulling and importing the image

docker pull --platform linux/arm64 justsong/one-api:v0.6.10

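docker save justsong/one-api:v0.6.10 -o one-api-v0.6.10.tar

# Run the above on the x86 machine with Internet access to pull and export the image

docker load -i one-api-v0.6.10.tar

# Run on the arm64 machine to import the image

Create docker-compose.yaml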

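services:
  one-api:
    # Use the same image name that was loaded with docker load above;
    # otherwise compose tries to pull from ghcr.io on the offline machine
    image: justsong/one-api:v0.6.10
    container_name: one-api
    restart: always
    ports:
      - "3000:3000"
    environment:
      - TZ=Asia/Shanghai
      - TIKTOKEN_CACHE_DIR=/data/cache
    volumes:
      - ./data:/data
      - ./cache:/data/cache

Starting the container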

docker-compose up -d

One API dashboard

Web access: http://IP:3000/

Default login credentials:

- Username: root

- Password: 123456

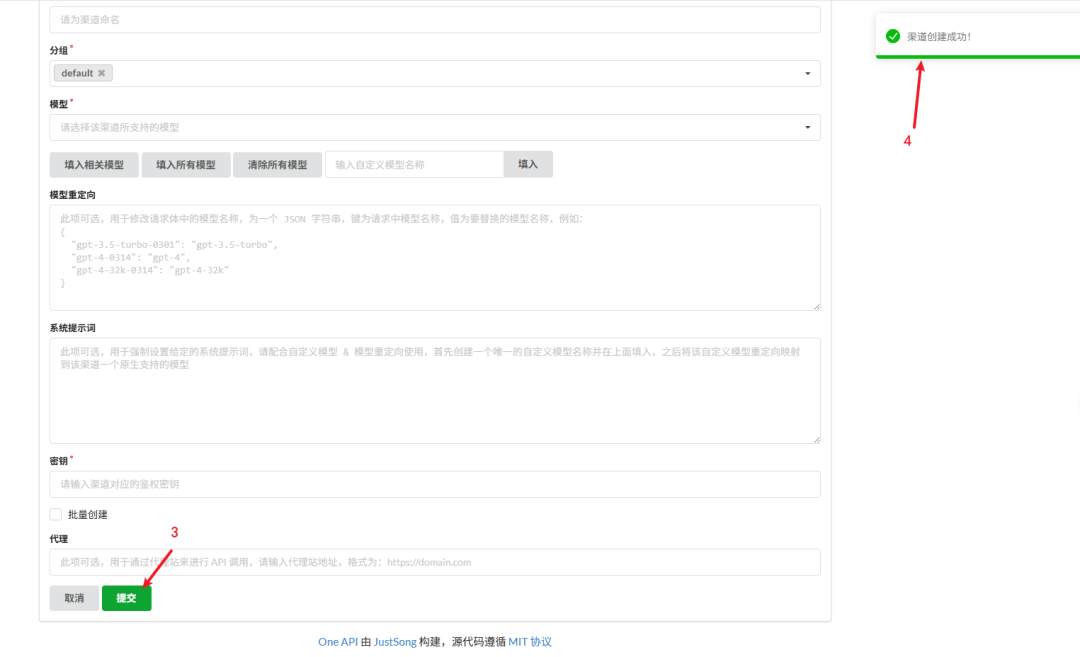

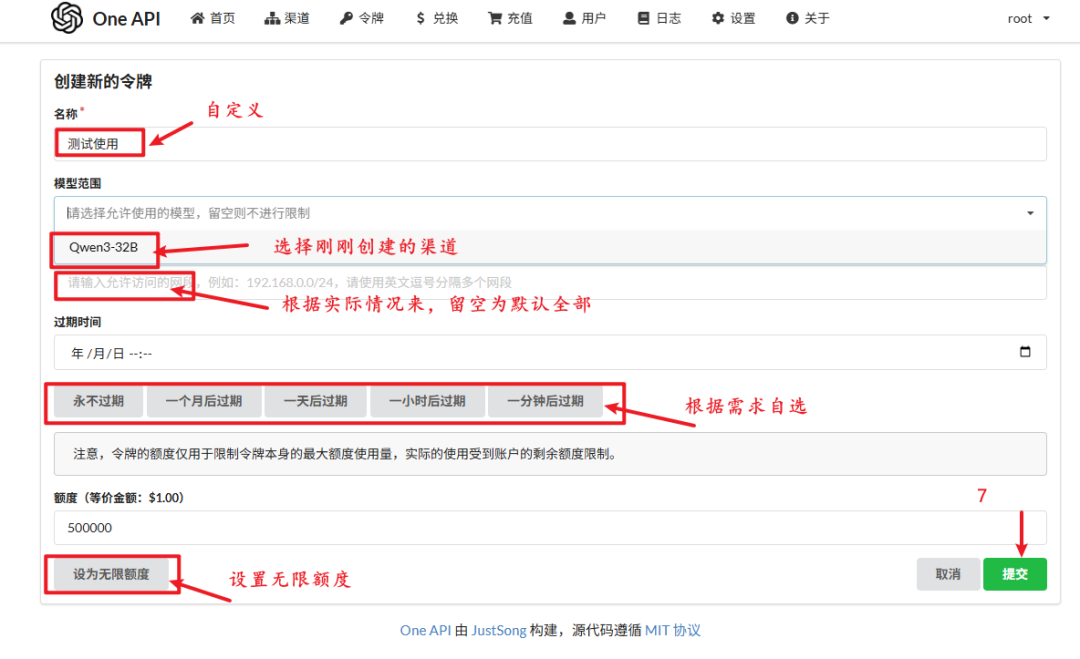

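One API configuration

Configure it as shown in the following figure.

- Note: the port in the figure should actually be 1025 (the MindIE service port configured earlier); I forgot to change it before taking the screenshot.

One API interface test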

curl "http://127.0.0.1:3000/v1/chat/completions" -H "Content-Type: application/json" \

-H "Authorization: Bearer sk-8di5qDTX0DHJETY3Ab7fBdD4424782A26ERH41FBH1Dd" \

-d '{

"model": "Qwen3-32B",

"stream": true,

"messages": [

{

"role": "user",

"content": "/no_think 你好."

}

]

}'

Normally, the request now goes through.
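The curl tests above only show the raw server-sent events. The minimal client below uses only the standard library and prints just the generated text. It assumes the OpenAI-style stream format: data: {...} lines ending with data: [DONE]. BASE_URL and API_KEY are placeholders. Use port 3000 to go through One API, or 1025 to call MindIE directly. The script name chat_stream.py is hypothetical.

```python
import json
import sys
import urllib.request

BASE_URL = "http://127.0.0.1:3000/v1"  # placeholder: One API; use port 1025 for MindIE directly
API_KEY = "sk-xxx"  # placeholder: the token created in One API


def parse_sse_lines(lines):
    """Yield the text deltas from OpenAI-style server-sent event lines."""
    for raw in lines:
        line = (raw.decode("utf-8") if isinstance(raw, bytes) else raw).strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break
        for choice in json.loads(payload).get("choices", []):
            text = (choice.get("delta") or {}).get("content")
            if text:
                yield text


def chat_stream(prompt, model="Qwen3-32B", base_url=BASE_URL, api_key=API_KEY):
    body = json.dumps({"model": model, "stream": True,
                       "messages": [{"role": "user", "content": prompt}]}).encode("utf-8")
    req = urllib.request.Request(f"{base_url}/chat/completions", data=body, method="POST",
                                 headers={"Content-Type": "application/json",
                                          "Authorization": f"Bearer {api_key}"})
    with urllib.request.urlopen(req, timeout=600) as resp:
        yield from parse_sse_lines(resp)  # the response iterates line by line


if __name__ == "__main__" and len(sys.argv) > 1:
    for piece in chat_stream(" ".join(sys.argv[1:])):
        print(piece, end="", flush=True)
    print()
```

Usage: python3 chat_stream.py "/no_think 你好." prints the answer as it streams in.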

Setting up a remote download server

tar -xzf python3.9-arm64.tar.gz

# This uses a Python 3.9 build I precompiled myself

cd python-aarch64/bin

./python3.9 -m http.server 8888
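python3.9 -m http.server 8888 serves the current working directory on all interfaces. Since Python 3.7 the module also accepts --directory (-d) and --bind (-b) to choose what is served and where. Below is the same thing as a small sketch, plus the matching download call for the receiving machine. The directory, port and script name serve_dir.py are placeholders.

```python
import functools
import http.server
import sys
import threading
import urllib.request


def start_file_server(directory, host="0.0.0.0", port=8888):
    """Serve directory over HTTP in a background thread; port=0 picks a free port."""
    handler = functools.partial(http.server.SimpleHTTPRequestHandler, directory=directory)
    server = http.server.ThreadingHTTPServer((host, port), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def download(url, dest):
    """On the receiving machine: roughly `wget URL -O dest`."""
    urllib.request.urlretrieve(url, dest)
    return dest


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical): python3 serve_dir.py /path/to/packages [port]
    port = int(sys.argv[2]) if len(sys.argv) > 2 else 8888
    srv = start_file_server(sys.argv[1], port=port)
    print(f"serving {sys.argv[1]} on port {srv.server_address[1]}, Ctrl+C to stop")
    try:
        threading.Event().wait()
    except KeyboardInterrupt:
        srv.shutdown()
```

Binding to a specific interface instead of 0.0.0.0 limits who can fetch the files while the server is running.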

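Other problems and solutions

1. How to distinguish multiple channels in One API that provide the same model from different sources

2. Fixing the network error when adding the One API-wrapped interface in Dify

Symptom: One API's port was mapped to port 8000 on the host. After Dify was started and the One API-wrapped model (with its key) was added through the Dify web UI, it reported an internet error. After some searching and testing I found the solution below, recorded here for reference.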

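# Step 1: create a custom network (named dify-oneapi here)

docker network create dify-oneapi

# Step 2: attach one-api to that network (no need to recreate the container)

docker network connect dify-oneapi one-api

# Step 3: attach the other containers that need access to the same network

# First look up the names of those containers (docker ps), then run: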

docker network connect dify-oneapi jw-nginx-1

docker network connect dify-oneapi jw-worker_beat-1

docker network connect dify-oneapi jw-plugin_daemon-1

docker network connect dify-oneapi jw-worker-1

docker network connect dify-oneapi jw-api-1

docker network connect dify-oneapi jw-web-1

docker network connect dify-oneapi jw-db-1

docker network connect dify-oneapi jw-sandbox-1

docker network connect dify-oneapi jw-redis-1

docker network connect dify-oneapi jw-ssrf_proxy-1

docker network connect dify-oneapi jw-weaviate-1


docker network connect dify-oneapi docker-nginx-1

docker network connect dify-oneapi docker-api-1

docker network connect dify-oneapi docker-worker_beat-1

docker network connect dify-oneapi docker-worker-1

docker network connect dify-oneapi docker-plugin_daemon-1

docker network connect dify-oneapi docker-sandbox-1

docker network connect dify-oneapi docker-web-1

docker network connect dify-oneapi docker-db-1

docker network connect dify-oneapi docker-redis-1

docker network connect dify-oneapi docker-ssrf_proxy-1

docker network connect dify-oneapi docker-weaviate-1

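# Step 4: in Dify, set the base URL used when adding the model provider plugin to:

http://one-api:3000/v1

# Note: this uses the container name one-api, which Docker resolves automatically through its built-in DNS on user-defined networks. 3000 is One API's port inside the container, not the host port.

After applying the steps above, the problem was solved.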
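Typing one docker network connect per container is error-prone. For example, it is easy to connect one-api again after step 2, and docker then fails because that endpoint already exists on the network. The minimal sketch below skips duplicates and containers that are already attached, reading the current members from docker network inspect. The script name connect_net.py is hypothetical, and the network must already exist (step 1).

```python
import json
import subprocess
import sys

NETWORK = "dify-oneapi"


def unique(names):
    """Order-preserving de-duplication."""
    return list(dict.fromkeys(names))


def attached_containers(network):
    """Names of containers already on the network, from `docker network inspect`."""
    out = subprocess.run(["docker", "network", "inspect", network],
                         check=True, capture_output=True, text=True).stdout
    containers = json.loads(out)[0].get("Containers") or {}
    return {c["Name"] for c in containers.values()}


def plan_connect(network, wanted, already):
    """docker network connect commands for containers not yet attached."""
    return [["docker", "network", "connect", network, name]
            for name in unique(wanted) if name not in already]


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical): python3 connect_net.py one-api docker-api-1 docker-worker-1 ...
    for cmd in plan_connect(NETWORK, sys.argv[1:], attached_containers(NETWORK)):
        print(" ".join(cmd))
        subprocess.run(cmd, check=True)
```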

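3. Creating a separate Dify instance

docker compose -p xx up -d

# -p sets the project name, so the containers are named xx-<service>-1 instead of being named after the directory (docker-<service>-1); the jw-* containers above appear to come from such a project

4. Resetting the Dify administrator email

docker exec -it docker-api-1 flask reset-email

Then enter the old and the new email address when prompted.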